What Is Psychoacoustics in Audio? Complete Guide

When I first started learning about audio, I realized that sound is not just about volume and frequency. It is also about how our brain understands what we hear. Psychoacoustics is the study of how humans perceive sound. It explains why two sounds with the same level can feel different.

This topic matters to me because modern audio systems depend on it. Music streaming, podcast platforms, and sound design all use psychoacoustic principles. For example, platforms like SoundCloud compress audio based on how people actually hear. As a result, file sizes stay small while the listening experience remains clear.

I wrote this article for creators, students, and audio learners. If you upload music, edit podcasts, or stream tracks online, understanding psychoacoustics will help you make better decisions.

What Is Psychoacoustics in Audio?

I understand psychoacoustics as the bridge between sound waves and human perception. It is a branch of audio science that studies how the brain processes what the ear receives. The ear detects vibrations, but the brain creates meaning from them.

In simple words, psychoacoustics in audio explains how we perceive pitch, loudness, tone, and space. I use this knowledge when thinking about compression, mixing, and streaming systems.

I have seen that two sounds can measure the same in decibels yet feel different. This happens because human hearing is not linear. Our ears are more sensitive to mid-range frequencies.

Psychoacoustics also explains masking and spatial hearing. Therefore, I consider it essential for both music production and digital streaming.

Why It Matters

In my experience, psychoacoustics improves audio efficiency. It allows smaller file sizes without major quality loss. This is especially important for mobile streaming.

I also see its value in mixing and mastering. Producers adjust frequencies based on how listeners perceive them. In contrast, ignoring perception often leads to muddy mixes.

For platforms that host millions of tracks, storage and bandwidth are critical. Smart compression reduces data usage. Therefore, both creators and listeners benefit.

How It Works

From my understanding, sound travels as waves through the air. The ear converts these waves into electrical signals. The brain then interprets pitch, tone, and direction.

One concept I often focus on is frequency masking. A loud sound can hide a softer sound that sits close to it in frequency. Masking also spreads upward in frequency more than downward, which is why a heavy bass line can bury the lower range of quiet vocals.

Temporal masking is another key idea. A loud sound can hide softer sounds that occur just before or after it. Modern codecs such as MP3 and AAC rely heavily on both kinds of masking. I explain the full audio compression process in a separate technical guide.

Key Concepts and Data

From my research, human hearing typically ranges from 20 Hz to 20,000 Hz in young adults, and the upper limit drops with age. However, sensitivity peaks between 2,000 Hz and 5,000 Hz. This range carries much of the detail that makes speech clear.

The equal-loudness contours, standardized in ISO 226, show that bass frequencies need more sound pressure to feel as loud as mid-range tones. The gap is widest at low listening levels. Therefore, low frequencies often require a higher amplitude in a mix.
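To get a feel for how uneven hearing sensitivity is, here is a minimal sketch using the A-weighting curve. This is an assumption on my part: A-weighting is a simplified filter that only loosely follows the shape of a low-level equal-loudness contour, and it does not reproduce the ISO 226 data. The function name `a_weighting_db` is just illustrative.

```python
import math

def a_weighting_db(freq_hz: float) -> float:
    """Approximate A-weighting gain in dB at a given frequency in Hz.

    Uses the standard analytic A-weighting formula. This is NOT the
    ISO 226 equal-loudness data, only a rough stand-in for it.
    """
    f2 = freq_hz ** 2
    numerator = (12194.0 ** 2) * f2 ** 2
    denominator = (
        (f2 + 20.6 ** 2)
        * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
        * (f2 + 12194.0 ** 2)
    )
    # The +2.00 dB offset normalizes the curve to about 0 dB at 1 kHz.
    return 20.0 * math.log10(numerator / denominator) + 2.00

# Print the weighting at a few frequencies to see the bass roll-off.
for freq in (50, 100, 1000, 2500, 10000):
    print(f"{freq:>6} Hz: {a_weighting_db(freq):+.1f} dB")
```

The strongly negative values at 50 Hz and 100 Hz reflect the same idea as the curves: the ear discounts bass, so bass needs more level to register as equally loud.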

Critical Bands and the Bark Scale

I find the concept of critical bands very important. The ear groups frequencies into about 24 critical bands. These bands form what is known as the Bark scale.

Sounds inside the same band are more likely to mask each other. Compression systems use this principle to remove masked sounds with minimal perceived impact.
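The idea can be sketched in a few lines. The example below converts frequencies to the Bark scale with the Zwicker and Terhardt approximation, then applies the Schroeder spreading function to estimate how much a loud tone masks a nearby quiet one. The `is_likely_masked` helper and its fixed 10 dB offset are my own simplifying assumptions; real codecs use far more detailed models.

```python
import math

def hz_to_bark(freq_hz: float) -> float:
    """Convert Hz to Bark (Zwicker and Terhardt approximation)."""
    return (13.0 * math.atan(0.00076 * freq_hz)
            + 3.5 * math.atan((freq_hz / 7500.0) ** 2))

def spreading_db(delta_bark: float) -> float:
    """Schroeder spreading function: how far (in dB) a masker's
    influence has dropped at a given Bark distance from it."""
    x = delta_bark + 0.474
    return 15.81 + 7.5 * x - 17.5 * math.sqrt(1.0 + x * x)

def is_likely_masked(masker_hz: float, masker_db: float,
                     probe_hz: float, probe_db: float,
                     offset_db: float = 10.0) -> bool:
    """Toy check: is the quiet probe tone below the masker's threshold?

    offset_db is an assumed, fixed gap between the masker level and
    the threshold it creates. It is illustrative, not a codec value.
    """
    delta = hz_to_bark(probe_hz) - hz_to_bark(masker_hz)
    threshold = masker_db + spreading_db(delta) - offset_db
    return probe_db < threshold

# A quiet tone right next to a loud 1 kHz tone, then one far away.
print(is_likely_masked(1000, 80, 1100, 40))  # close in frequency
print(is_likely_masked(1000, 80, 8000, 40))  # many Bark away
```

Under these assumptions, the nearby 1,100 Hz tone falls below the threshold while the distant 8,000 Hz tone does not, which is the behavior a lossy encoder exploits.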

Loudness vs Sound Pressure Level

Sound pressure level is measured in decibels (dB SPL). This is a physical measurement of sound pressure relative to a fixed reference. However, perceived loudness is different.

Loudness level is expressed in phons, and perceived loudness in sones. Two sounds with the same dB value may not feel equally loud. Equal-loudness curves explain this difference.
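A small sketch makes the difference between the two scales concrete. It uses the standard 20 µPa reference for dB SPL and the common rule that loudness in sones doubles for every 10 phon above 40 phon. The function names are illustrative, and the sone formula is only a reasonable approximation at 40 phon and above.

```python
import math

P_REF = 20e-6  # reference pressure in pascals (20 micropascals)

def spl_db(pressure_pa: float) -> float:
    """Physical level: RMS sound pressure in Pa to dB SPL."""
    return 20.0 * math.log10(pressure_pa / P_REF)

def phon_to_sone(phon: float) -> float:
    """Perceptual scale: loudness level in phons to sones.

    Rule of thumb, intended for 40 phon and above: every extra
    10 phon doubles the perceived loudness.
    """
    return 2.0 ** ((phon - 40.0) / 10.0)

print(f"1 Pa is about {spl_db(1.0):.1f} dB SPL")
for phon in (40, 50, 60, 70):
    print(f"{phon} phon -> {phon_to_sone(phon):.0f} sone")
```

The decibel scale tracks pressure logarithmically, while the sone scale tracks what listeners report: a 10 phon step reads as roughly twice as loud.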

Core Psychoacoustic Concepts

| Concept | Description | Practical Use |
| --- | --- | --- |
| Frequency Masking | Loud sound hides quieter sounds at nearby frequencies | Audio compression |
| Temporal Masking | Loud sound hides quieter sounds just before or after it | MP3 encoding |
| Equal-Loudness Curve | Ear sensitivity varies by frequency | Mixing decisions |
| Binaural Hearing | Two-ear spatial perception | Stereo design |

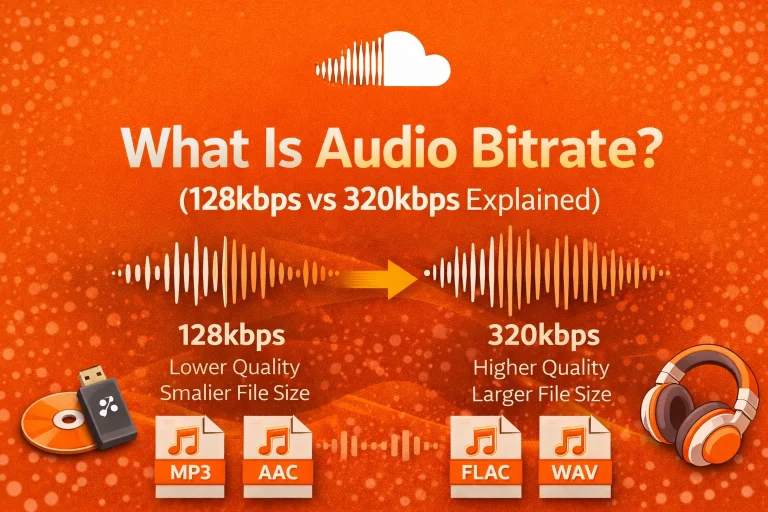

Audio Format Comparison

| Format | Type | Uses Psychoacoustics? | Relative File Size |
| --- | --- | --- | --- |
| WAV | Uncompressed | No | Large |
| MP3 | Lossy | Yes | Small |
| AAC | Lossy | Yes | Smaller than MP3 |
| FLAC | Lossless | No | Medium |

From my perspective, lossy formats depend heavily on psychoacoustic models. In contrast, lossless formats preserve the full audio data but require more storage space. MP3 is a clear example: its encoder is built around a psychoacoustic model that decides which parts of the signal can be discarded.

Common Problems

I have noticed that psychoacoustic models are not perfect. Over-compression can remove important details. This may result in flat or lifeless sound.

People also hear differently. Age and hearing health affect perception. However, most compression systems are built around average hearing ranges.

Low-quality headphones can distort the final experience. This sometimes changes the balance that the producer intended. It is common in mobile listening situations.

Practical Example

When I look at a music creator uploading a track to SoundCloud, I see psychoacoustics in action. A 50 MB WAV file of CD-quality audio can shrink to around 11 MB when encoded to MP3 at 320 kbps.

The encoder discards, or stores with less precision, the components that fall below the masking threshold in each critical band. Most listeners cannot detect these removed elements. As a result, streaming becomes faster with little noticeable loss.
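The file-size figures above can be checked with simple arithmetic. This sketch assumes the WAV is CD-quality stereo (44.1 kHz, 16-bit), treats 1 MB as 1,000,000 bytes, and ignores file headers and metadata. The function names are illustrative.

```python
def pcm_duration_seconds(size_bytes: float, sample_rate: int = 44100,
                         bit_depth: int = 16, channels: int = 2) -> float:
    """Estimate playing time of uncompressed PCM audio from its size."""
    bytes_per_second = sample_rate * (bit_depth // 8) * channels
    return size_bytes / bytes_per_second

def encoded_size_bytes(duration_s: float, bitrate_kbps: float) -> float:
    """Estimate size of a constant-bitrate file: bits per second
    times duration, divided by 8 to convert bits to bytes."""
    return duration_s * bitrate_kbps * 1000 / 8

wav_bytes = 50 * 1_000_000                    # the 50 MB WAV from the example
duration = pcm_duration_seconds(wav_bytes)    # assumed CD-quality stereo
mp3_bytes = encoded_size_bytes(duration, 320)

print(f"Duration: {duration:.0f} s")
print(f"MP3 at 320 kbps: {mp3_bytes / 1_000_000:.1f} MB")
print(f"Size ratio: {wav_bytes / mp3_bytes:.1f}x smaller")
```

Under these assumptions the track runs a little under five minutes and the MP3 comes out near 11 MB, in the same ballpark as the figure quoted above. A higher-resolution WAV of the same size would be shorter, so the MP3 would be smaller still.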

I also observe this in stereo imaging. Engineers use slight timing differences between the left and right channels. The brain interprets these differences as width and depth.
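To show how small these timing differences are, here is a rough sketch based on Woodworth's spherical-head formula for interaural time difference. The head radius of 8.75 cm and speed of sound of 343 m/s are typical textbook values that I am assuming, and the formula is meant for sources between 0 and 90 degrees to one side.

```python
import math

def woodworth_itd_seconds(azimuth_deg: float,
                          head_radius_m: float = 0.0875,
                          speed_of_sound: float = 343.0) -> float:
    """Approximate arrival-time difference between the two ears.

    azimuth_deg: 0 is straight ahead, 90 is directly to one side.
    Both defaults are assumed typical values, not measurements.
    """
    theta = math.radians(azimuth_deg)
    return (head_radius_m / speed_of_sound) * (theta + math.sin(theta))

def delay_in_samples(delay_s: float, sample_rate: int = 44100) -> int:
    """Convert a delay in seconds to the nearest whole sample."""
    return round(delay_s * sample_rate)

for angle in (0, 30, 60, 90):
    itd = woodworth_itd_seconds(angle)
    print(f"{angle:>2} deg: {itd * 1000:.2f} ms, "
          f"{delay_in_samples(itd)} samples at 44.1 kHz")
```

Even a source fully to one side produces a difference of well under a millisecond, only a few dozen samples at 44.1 kHz. That is why very small channel delays can noticeably shift or widen a stereo image.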

Expert Tips

- I recommend mixing at moderate volume levels to avoid masking mistakes

- I always test audio on headphones and mobile speakers

I avoid extreme compression settings. Instead, I balance clarity with file efficiency. Before uploading to streaming platforms, I export a high-quality master file.

Conclusion

From my experience and research, psychoacoustics in audio explains how we truly hear sound. It connects physical sound waves with brain interpretation. This knowledge supports modern compression, mixing, and streaming systems.

Whether I am analyzing music production or platforms like SoundCloud, I see psychoacoustic principles working behind the scenes. When applied carefully, they improve efficiency without harming listening quality. Understanding psychoacoustics helps me make smarter audio decisions, and it can help you do the same.

Frequently Asked Questions

1. Is psychoacoustics safe?

Yes. Based on my understanding, psychoacoustics is simply a scientific study of hearing. It does not cause harm. It explains how perception works.

2. Is it legal to use psychoacoustic compression?

Yes. Psychoacoustic compression is a standard method used across the audio industry. It is commonly applied in streaming and broadcasting systems.

3. Does psychoacoustics work on mobile devices?

Yes. I see it as essential for mobile streaming. Smaller files load faster and consume less data.

4. Why does audio sometimes sound bad after compression?

In my experience, this happens when compression settings are too aggressive. Important audio details may be removed. Low bitrate choices can reduce clarity.

5. What are the alternatives to psychoacoustic compression?

Uncompressed formats like WAV keep full data. Lossless formats such as FLAC reduce size without removing information. However, they use more storage than lossy formats.

6. Can psychoacoustics improve music production?

Yes. I use masking and loudness principles to improve clarity in mixes. Understanding perception helps create balanced and professional sound.